LeRobot Studio: a browser for embodied datasets

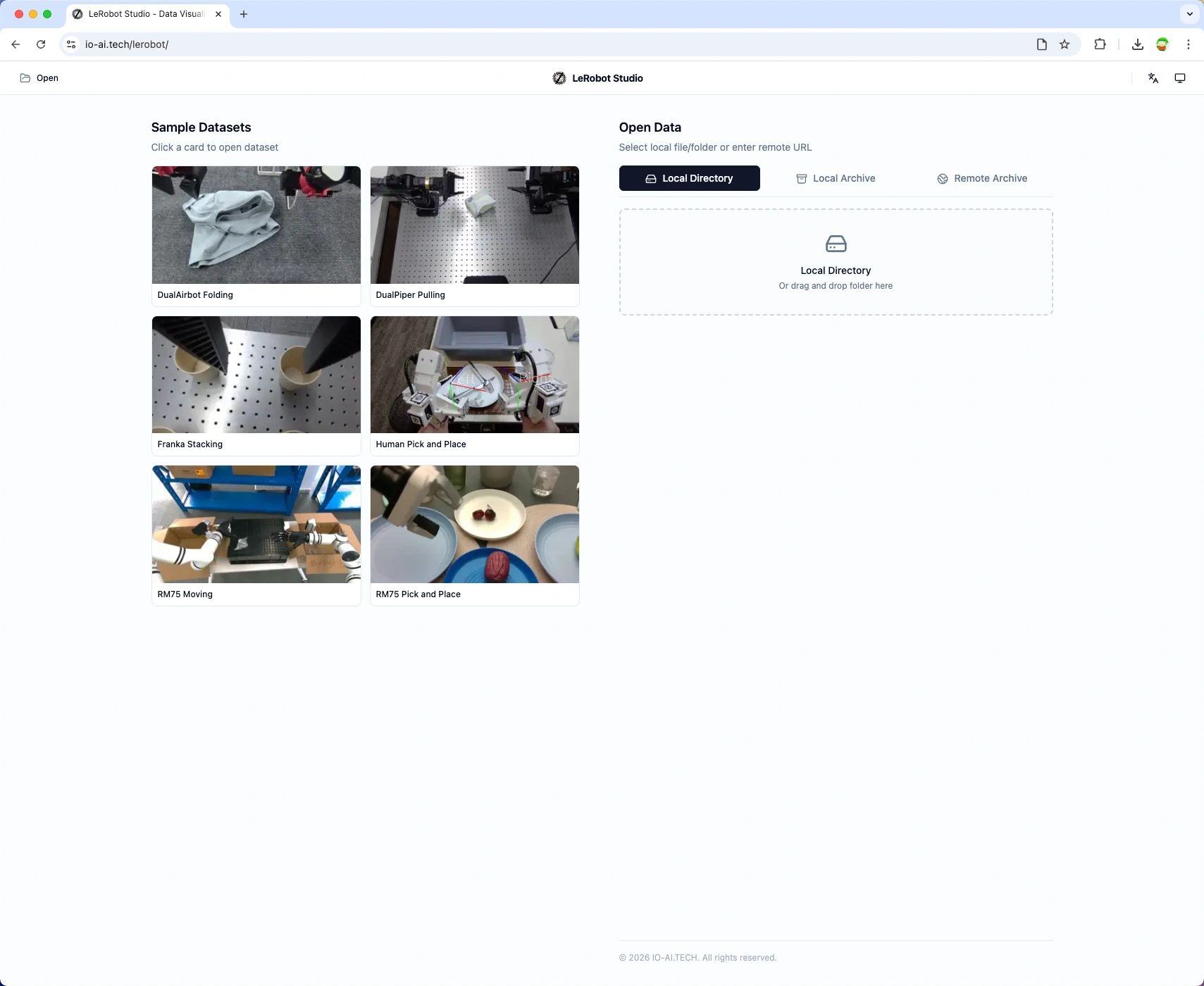

LeRobot Studio (https://io-ai.tech/lerobot/) is a local LeRobot inspection tool built by IO-AI.

Drag a local LeRobot folder or archive into the browser to play it back. The data stays on your machine, so loading is fast and nothing is uploaded.

Why the LeRobot format matters

High-quality datasets drive embodied AI, yet robotics data has long suffered from fragmented formats, inefficient storage, and poor reuse.

The LeRobot dataset spec (v2.1 / v3.0) is becoming a de facto interchange format. Major stacks, including OpenPI and NVIDIA GR00T (Isaac Lab), support or interoperate with it. It addresses the “data Tower of Babel” with:

- Unified structure — Parquet + MP4 bundles vision, motor state, and actions for cross-platform use.

- Cloud-native workflows — streaming lets you load from the Hugging Face Hub or local storage without downloading everything upfront.

- Community interoperability — standardized layouts improve discoverability, indexing, and sharing.

LeRobot Studio is a general-purpose viewer built on that standard so you can understand and validate datasets quickly.

Core features

LeRobot Studio runs entirely in the browser—no local Python setup required for inspection.

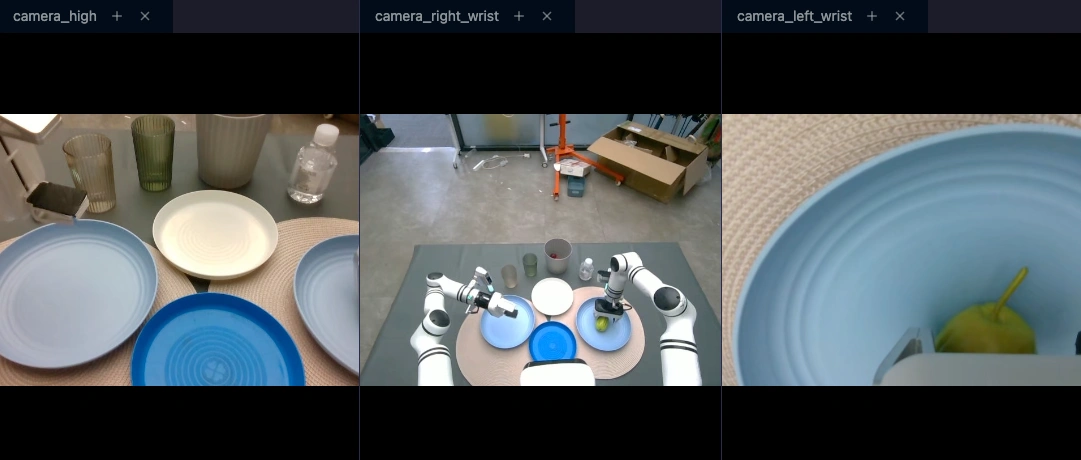

1. Multimodal synchronized playback

Robot logs combine high-rate sensors and variable-FPS video. Studio aligns them on a single timeline so you can see:

- Multi-camera video

- Joint state curves

- Commanded actions

Scrub the timeline to inspect perception and control at any instant.
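One way to picture alignment on a shared timeline is nearest-timestamp lookup: for a given playhead time, take from each stream the sample whose timestamp is closest. The sketch below illustrates that idea only; it is not Studio's actual implementation, and `nearest_index` and the sample timestamps are hypothetical.

```python
from bisect import bisect_left


def nearest_index(timestamps, t):
    """Return the index of the timestamp closest to t (timestamps sorted ascending)."""
    if not timestamps:
        raise ValueError("timestamps must not be empty")
    i = bisect_left(timestamps, t)
    if i == 0:
        return 0
    if i == len(timestamps):
        return len(timestamps) - 1
    # Pick whichever neighbour is closer; ties go to the earlier sample.
    before, after = timestamps[i - 1], timestamps[i]
    return i - 1 if (t - before) <= (after - t) else i


# Hypothetical streams: video with uneven frame times, joint state at 100 Hz.
video_ts = [0.000, 0.034, 0.065, 0.101, 0.133]
state_ts = [k / 100 for k in range(15)]

playhead = 0.07
frame = nearest_index(video_ts, playhead)   # video frame shown at the playhead
sample = nearest_index(state_ts, playhead)  # state sample plotted at the playhead
```

Binary search keeps each lookup logarithmic in the stream length, which matters when scrubbing through long episodes.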

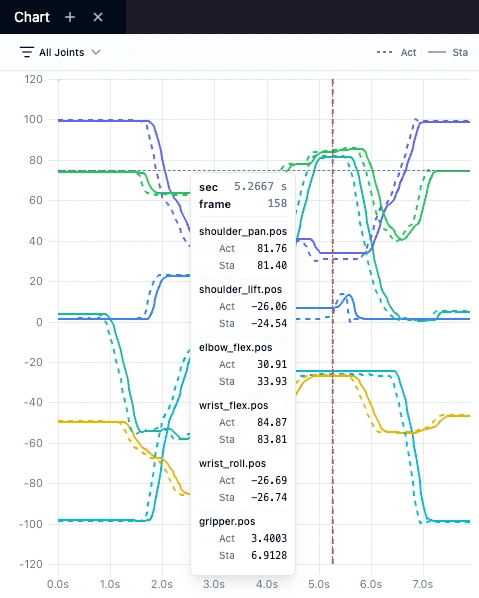

2. Command vs state comparison

During training or debugging, comparing commanded actions to measured state is essential.

- Action: commanded control (dashed in plots).

- State: measured feedback (solid).

The chart view highlights delay, jitter, or bias, which helps distinguish hardware issues from logging bugs.
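To put numbers on what the chart shows, delay and bias can be estimated from the two series directly. The following is a minimal sketch for a single joint, assuming both series are sampled at the same rate; `estimate_delay`, `estimate_bias`, and the synthetic data are illustrative and not part of Studio.

```python
def estimate_delay(action, state, max_lag):
    """Return the lag (in frames) at which state best tracks the earlier action.

    For each lag in 0..max_lag, compare state[k + lag] with action[k] and score
    the lag by the variance of the differences, so a constant bias is ignored.
    """
    best_lag, best_err = 0, float("inf")
    for lag in range(max_lag + 1):
        diffs = [s - a for a, s in zip(action, state[lag:])]
        if len(diffs) < 2:
            break
        mean = sum(diffs) / len(diffs)
        err = sum((d - mean) ** 2 for d in diffs) / len(diffs)
        if err < best_err:
            best_lag, best_err = lag, err
    return best_lag


def estimate_bias(action, state, lag=0):
    """Mean of (state - action) after shifting state back by `lag` frames."""
    diffs = [s - a for a, s in zip(action, state[lag:])]
    return sum(diffs) / len(diffs)


# Synthetic example: the state follows the command 3 frames late, offset by 0.5.
action = [0, 1, 2, 3, 4, 3, 2, 1] * 3
state = [action[max(k - 3, 0)] + 0.5 for k in range(len(action))]

lag = estimate_delay(action, state, max_lag=5)
bias = estimate_bias(action, state, lag)
# For this synthetic data: lag == 3 and bias == 0.5
```

Scoring each lag by variance rather than raw squared error keeps a constant offset from skewing the delay estimate. Multiply the lag by the frame period (1 / fps) to get a delay in seconds.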

3. Quick validation and dataset health

Studio loads local folders or .tar.gz archives in-browser (no upload). You can also open remote Hub datasets by URL for quick previews.

After loading, open the Dataset health sidebar to see structural checks (errors / warnings / pass), including:

- Filesystem layout: meta/info.json, meta/episodes, data/, videos/ for v2.1 or v3.0

- Metadata: required fields such as fps, codebase_version

- Features: dtypes and shapes

- Episodes: counts, lengths, zero-length segments

Issues are listed explicitly so you can confirm exports before training.
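For a rough equivalent of the layout and metadata checks in a script, something like the following could work. It is a sketch under stated assumptions, not Studio's validator: `check_dataset` is a hypothetical helper, it covers only the paths and fields named above, and it assumes the episode metadata is a file or directory under meta/ whose name starts with `episodes`.

```python
import json
from pathlib import Path

REQUIRED_FIELDS = ("fps", "codebase_version")


def check_dataset(root):
    """Return a list of (level, message) findings for a local dataset folder."""
    root = Path(root)
    findings = []

    # Filesystem layout: the paths listed in the text above.
    for rel in ("meta/info.json", "data", "videos"):
        if not (root / rel).exists():
            findings.append(("error", f"missing {rel}"))

    # Assumption: episode metadata is a file or directory named episodes*.
    meta = root / "meta"
    if not (meta.is_dir() and any(meta.glob("episodes*"))):
        findings.append(("error", "missing meta/episodes"))

    # Metadata: required fields in meta/info.json.
    info_path = meta / "info.json"
    if info_path.is_file():
        try:
            info = json.loads(info_path.read_text(encoding="utf-8"))
        except json.JSONDecodeError:
            findings.append(("error", "meta/info.json is not valid JSON"))
            return findings
        if not isinstance(info, dict):
            findings.append(("error", "meta/info.json is not a JSON object"))
            return findings
        for field in REQUIRED_FIELDS:
            if field not in info:
                findings.append(("error", f"meta/info.json lacks '{field}'"))
        fps = info.get("fps")
        if fps is not None and not (isinstance(fps, (int, float)) and fps > 0):
            findings.append(("warning", f"unexpected fps value: {fps!r}"))

    return findings
```

An empty result means these particular checks passed. Feature dtypes, shapes, and episode lengths are not covered here.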

How to access it

Option A — IO-AI website

- No login required.

- Best for quick local inspection and demo datasets.

Option B — IO-AI data platform

- Integrated with dataset management workflows.

- Best after capture, labeling, or conversion when you want QA inside the platform.

UI tour

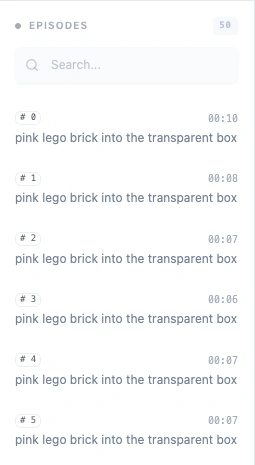

Episode sidebar

Browse and search episodes with metadata to jump to interesting segments.

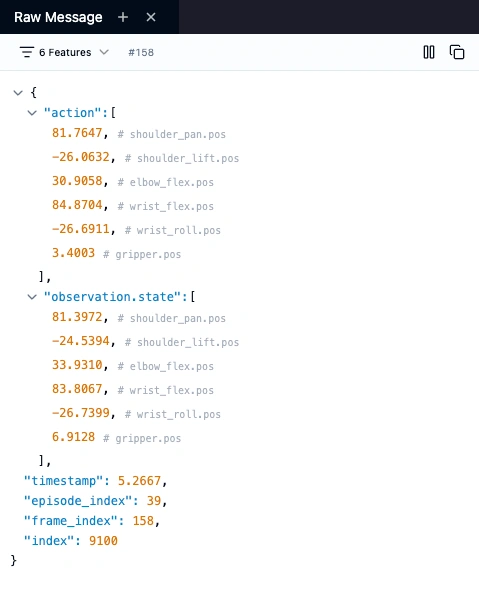

Raw data panel

Inspect the per-frame JSON payload—useful for copying timestamp, action, or state values into debugging scripts.
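As an illustration of that workflow, the snippet below parses a pasted frame and reports the joint with the largest tracking error. The payload shape is an assumption: the keys `timestamp`, `action`, and `observation.state` follow common LeRobot feature names, but the JSON Studio actually shows may be structured differently, and the values are made up.

```python
import json

# Hypothetical payload pasted from the raw data panel; the real shape may differ.
pasted = """
{"timestamp": 1.25,
 "action": [0.10, -0.40, 0.85],
 "observation.state": [0.08, -0.42, 0.80]}
"""

frame = json.loads(pasted)

# Per-joint tracking error: measured state minus commanded action.
errors = [s - a for a, s in zip(frame["action"], frame["observation.state"])]

# Index of the joint with the largest absolute error.
worst_joint = max(range(len(errors)), key=lambda j: abs(errors[j]))

print(f"t={frame['timestamp']:.2f}s worst joint={worst_joint} "
      f"error={errors[worst_joint]:+.3f}")
```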

Playback controls

Frame-accurate controls, including frame stepping, let you analyze grasp or insertion phases.

Dataset health panel

Open Dataset health to review automatic validation results (pass / warning / error) covering v2.1/v3.0 structure, meta/info, features, and episodes.

About IO-AI

IO-AI (io-ai.tech) builds infrastructure for embodied intelligence. LeRobot Studio is part of our data toolchain to tighten the data loop.

- Website: https://io-ai.tech

- Data platform: https://open.platform.io-ai.tech

- Official LeRobot docs: https://huggingface.co/docs/lerobot