Data QC

How can large volumes of ROS recordings be checked automatically and consistently before training and export—against baselines like frame rate, sensor topics, and time sync?

Typical needs

- Batch-filter data with low FPS, missing critical topics, or bad timestamps before training.

- Different thresholds per project, plus one global “hard floor” for the whole platform.

- After rule changes, re-run QC on historical data so conclusions match the latest rules.

- Manual overrides when a rule is right but a sample is exceptional, with an audit trail.

The Data QC module combines QC rules with background jobs: the platform scans a structured report for each ROS recording, evaluates the assertions you configured in the UI, and shows pass/fail on each dataset.

- Data QC (this page): Rule-based checks on ROS trajectory / ROS recordings (MCAP, bag, db3, etc.)—numeric thresholds, required/forbidden topics—tied to preprocessing, export, and training.

- Video QC: Multi-stream Doctor diagnostics (drops, artifacts, BRISQUE)—see Video QC.

Core concepts

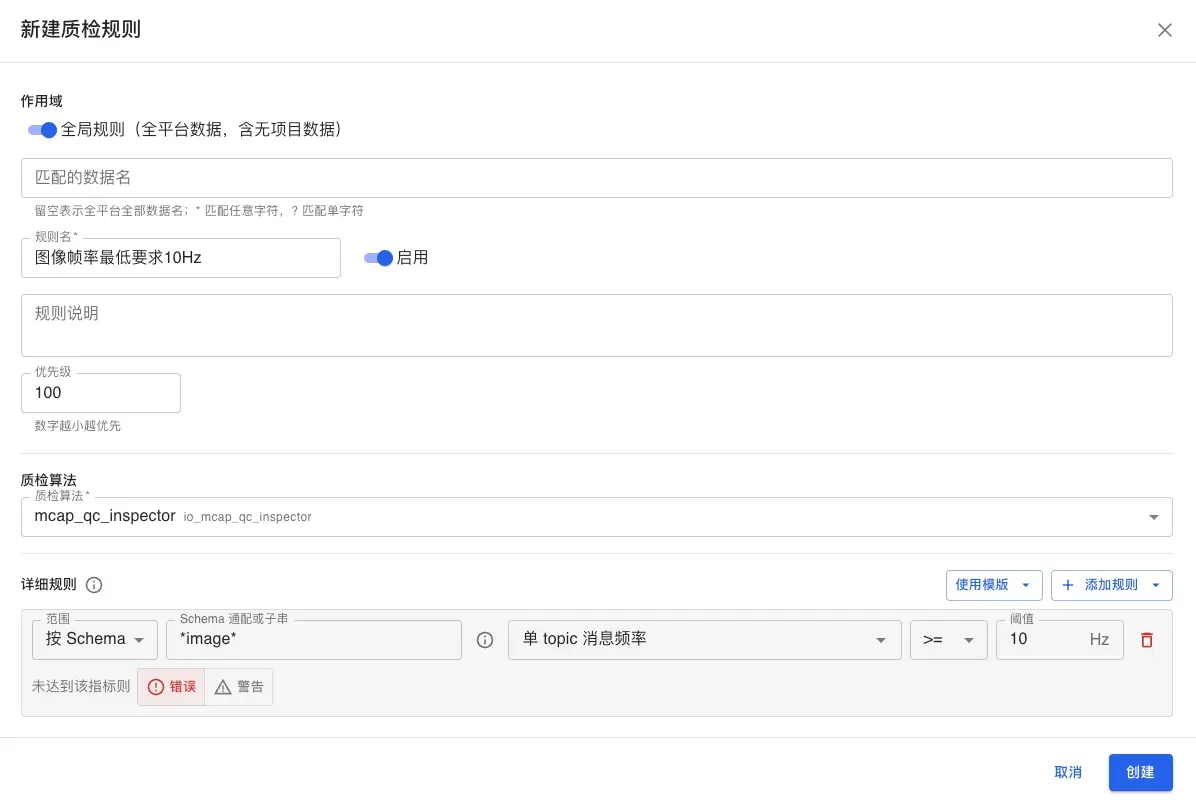

QC rules

Each rule binds one QC algorithm (ROS recording inspection; MCAP reports are primary today; other containers/formats are rolling out), a scope, and several assertions.

| Concept | Description |
|---|---|
| Scope | Project rule: only datasets in that project. Global rule: all ROS recordings on the platform (including unassigned). Datasets in a project match both global and project rules; each produces its own runs. |
| Dataset name match | Glob on `dataset.name` (e.g. `*arm*`); blank means all names in that scope. |
| Priority | Lower number runs first (display and scheduling); rules still judge independently when both match. |
| Enabled | Disabled rules do not match or auto-queue; enabling can trigger backfill on history. |
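The matching behavior in the table can be sketched in a few lines of Python. This is a minimal illustration, not the platform's implementation: the `Rule` class, its field names, and `matching_rules` are hypothetical, and case-sensitive glob matching is an assumption.

```python
from dataclasses import dataclass
from fnmatch import fnmatchcase
from typing import Optional


@dataclass
class Rule:
    # Hypothetical fields for illustration; not the platform's schema.
    name: str
    scope: str                        # "global" or "project"
    project_id: Optional[str] = None  # only meaningful for project rules
    name_glob: str = ""               # blank means all names in scope
    priority: int = 100               # lower number runs first
    enabled: bool = True


def matching_rules(rules, dataset_name, dataset_project_id):
    """Return the enabled rules that apply to a dataset, ordered by priority."""
    matched = []
    for rule in rules:
        if not rule.enabled:
            continue  # disabled rules never match
        if rule.scope == "project" and (
            dataset_project_id is None or rule.project_id != dataset_project_id
        ):
            continue  # project rules only see datasets in their own project
        if rule.name_glob and not fnmatchcase(dataset_name, rule.name_glob):
            continue  # glob on the dataset name (case-sensitive by assumption)
        matched.append(rule)
    return sorted(matched, key=lambda r: r.priority)


rules = [
    Rule("fps-floor", "global", priority=10),
    Rule("arm-topics", "project", project_id="p1", name_glob="*arm*", priority=5),
    Rule("disabled-rule", "global", enabled=False),
]
# A dataset inside project p1 matches both the project rule and the global rule.
print([r.name for r in matching_rules(rules, "left_arm_01", "p1")])
# An unassigned dataset is only covered by global rules.
print([r.name for r in matching_rules(rules, "left_arm_01", None)])
```

Each matched rule would then produce its own run. Priority only affects ordering here, not the verdict of any individual rule.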

Assertion types

Assertions are the conditions inside a rule:

- Numeric threshold: Comparator + threshold on a report metric (e.g. `frame_rate >= 20`); scope can limit to whole file or topic patterns (see UI for options).

- Required topic: Topic must appear in the report or the run fails.

- Forbidden topic: Topic must not appear or the run fails.

Severity: error (shown as blocking / fail in the UI) fails the run when violated; warning is informational and does not by itself fail the run—handy to observe before tightening.
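To make the interaction between assertion types and severity concrete, here is a small sketch of how one run might be evaluated. The report layout (`metrics`, `topics`), the assertion dictionaries, and the function names are assumptions made for illustration. Treating a missing metric as a violation and matching topics by exact name are also assumptions.

```python
import operator

# Comparators a numeric threshold assertion might use (illustrative set).
COMPARATORS = {
    ">=": operator.ge,
    ">": operator.gt,
    "<=": operator.le,
    "<": operator.lt,
    "==": operator.eq,
}


def check_assertion(assertion, report):
    """Return True when the assertion holds for the report."""
    kind = assertion["type"]
    if kind == "numeric":
        value = report["metrics"].get(assertion["metric"])
        if value is None:
            return False  # assumption: a missing metric counts as a violation
        return COMPARATORS[assertion["op"]](value, assertion["threshold"])
    present = assertion["topic"] in report["topics"]  # exact match by assumption
    if kind == "required_topic":
        return present
    if kind == "forbidden_topic":
        return not present
    raise ValueError(f"unknown assertion type: {kind}")


def evaluate_run(assertions, report):
    """Fail the run only if an error-severity assertion is violated."""
    violations = [a for a in assertions if not check_assertion(a, report)]
    failed = any(a.get("severity", "error") == "error" for a in violations)
    return {"verdict": "fail" if failed else "pass", "violations": violations}


report = {"metrics": {"frame_rate": 18.5}, "topics": ["/camera/image", "/joint_states"]}
assertions = [
    {"type": "numeric", "metric": "frame_rate", "op": ">=", "threshold": 20,
     "severity": "warning"},
    {"type": "required_topic", "topic": "/joint_states", "severity": "error"},
    {"type": "forbidden_topic", "topic": "/debug", "severity": "error"},
]
result = evaluate_run(assertions, report)
print(result["verdict"], len(result["violations"]))
```

In this example the frame-rate check is violated but only at warning severity, so the run still passes while the violation stays visible. Switching that assertion to error severity would fail the run.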

Permissions

| Action | Admin | Project manager | Other roles |
|---|---|---|---|
| View QC results / logs | Yes | Yes (within data access) | Collectors often see their own data |
| Edit project QC rules | Yes | Yes (in project or public project) | Usually no |
| Edit global QC rules | Yes | No | No |

Only admins can create, edit, delete, or duplicate global rules; others may see them read-only.

Flow overview

When QC runs automatically

- After preprocessing: When ROS recording preprocessing finishes, the platform enqueues QC for datasets that match enabled rules (no need to click each row). Today auto-queue is still MCAP-first; bag / db3 alignment with reports and rules is rolling out—follow release notes.

- On rule create/change: Saving or enabling a rule can asynchronously backfill matching historical data (large queues may take time).

- Manual: From a dataset’s QC entry points you can trigger a run (exact button names follow the UI).

Data in unsupported formats or not yet preprocessed may not enter the auto QC queue.

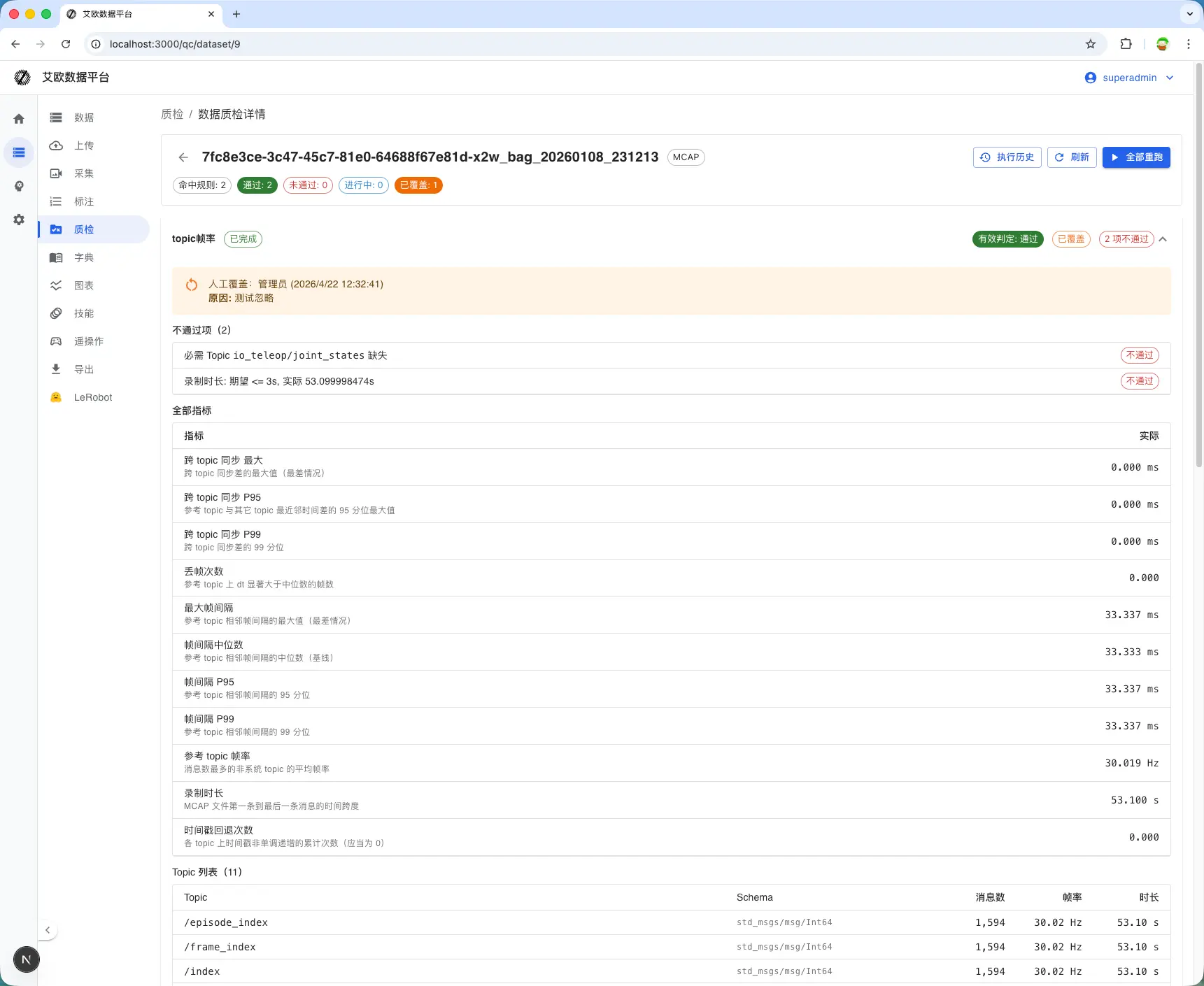

Reading results

- Summary: On list or detail, see how many rules apply and pass / fail / running (exact chips follow the UI).

- Single run: Each rule has its own run with metrics and failed assertion details.

- System tags: Failures may add tags such as `qc:failed` for filtering; export blocking depends on platform policy.
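As a rough illustration of how per-rule runs could roll up into the summary and system tags described above, consider the sketch below. The run dictionaries, the status strings, and `summarize_runs` are hypothetical. Adding `qc:failed` whenever any run fails is an assumption about tagging policy.

```python
from collections import Counter


def summarize_runs(runs):
    """Count runs per status and derive a failure tag (illustrative only)."""
    counts = Counter(run["status"] for run in runs)
    return {
        "rules": len(runs),
        "pass": counts["pass"],
        "fail": counts["fail"],
        "running": counts["running"],
        # Assumption: any failed run tags the dataset for filtering.
        "tags": {"qc:failed"} if counts["fail"] else set(),
    }


runs = [
    {"rule": "fps-floor", "status": "pass"},
    {"rule": "arm-topics", "status": "fail"},
    {"rule": "sync-check", "status": "running"},
]
summary = summarize_runs(runs)
print(summary)
```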

Manual overrides

When a rule is generally right but one sample is special (e.g. deliberate low FPS, later fixed in post), you can override that run (pass/fail + reason). Overrides feed the effective verdict and tie into tags and export logic.
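One way to picture the override mechanism is a record that sits next to the computed verdict and takes precedence when present. The `Override` class, `make_override`, and `effective_verdict` below are hypothetical names. Requiring a non-empty reason and storing the author and a UTC timestamp are assumptions about what an audit trail might contain.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Override:
    # Hypothetical audit record; the platform's actual fields may differ.
    verdict: str          # "pass" or "fail"
    reason: str
    author: str
    created_at: datetime


def make_override(verdict, reason, author):
    """Build an override, rejecting invalid verdicts and empty reasons."""
    if verdict not in ("pass", "fail"):
        raise ValueError("verdict must be 'pass' or 'fail'")
    if not reason.strip():
        # Assumption: a reason is mandatory so the audit trail stays useful.
        raise ValueError("an override needs a reason")
    return Override(verdict, reason.strip(), author, datetime.now(timezone.utc))


def effective_verdict(computed: str, override: Optional[Override] = None) -> str:
    """The override wins when present; otherwise use the computed verdict."""
    return override.verdict if override is not None else computed


override = make_override("pass", "Deliberate low FPS, fixed in post", "alice")
print(effective_verdict("fail", override))  # override takes precedence
print(effective_verdict("fail"))            # no override: computed verdict stands
```

Keeping the computed verdict and the override as separate values means the original rule result is never lost, so removing an override restores the rule's own conclusion.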

Screenshots

Rule list

Create / edit rule

QC on dataset detail